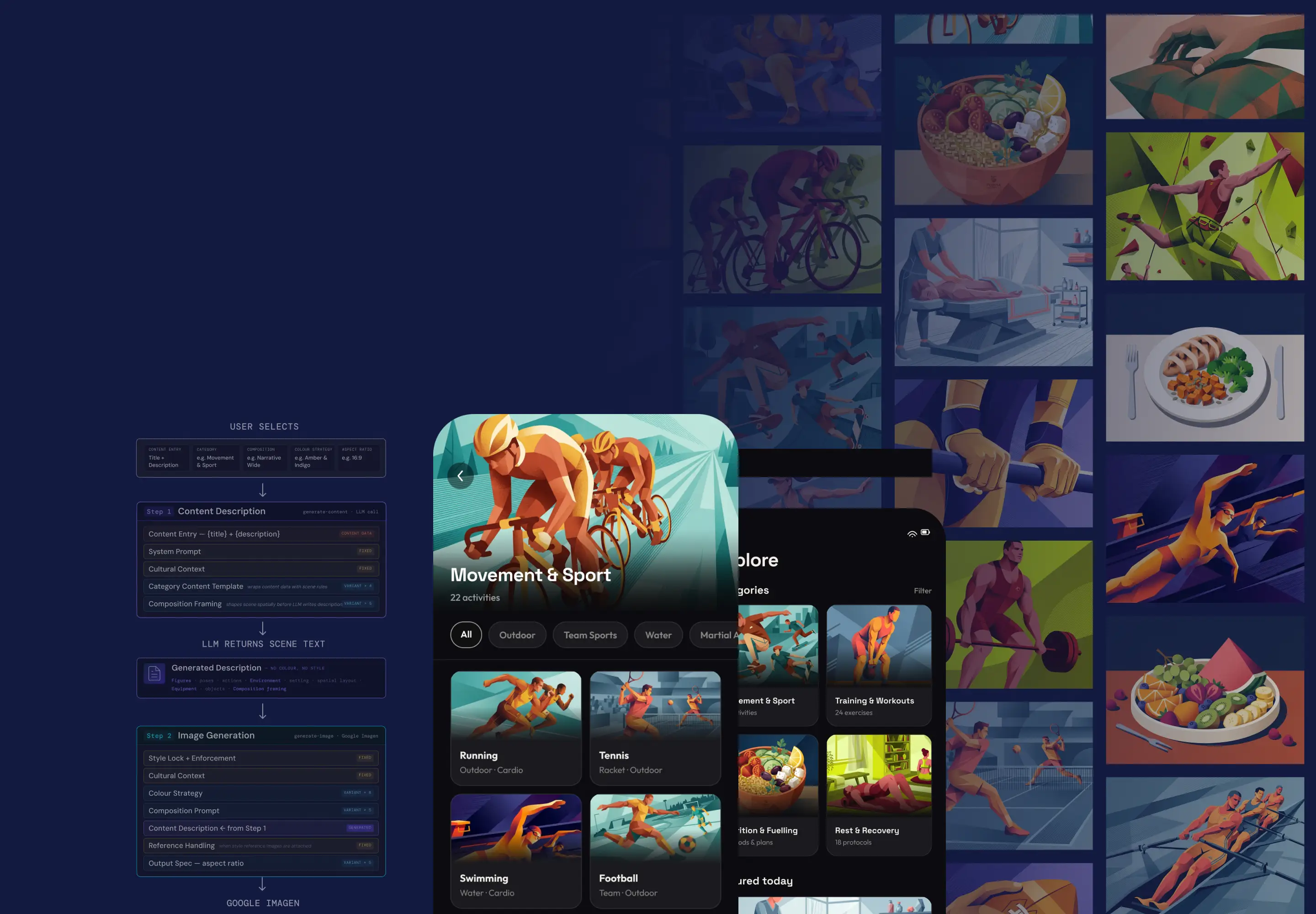

AI Illustration System

Designing and building a scalable AI image generation system for use in a client app — from prompt architecture to working tool

Summary

Context & Challenge

The real problem wasn't generating good images. It was generating 200 of them.

Key Decision (1/4)

Separate content from style — completely.

Key Decision (2/4)

Build a variant system — not just a style.

Key Decision (3/4)

Build the tool — don't hand it off.

Key Decision (4/4)

Debug the style systematically — one layer at a time.

Outcomes & Impact

“Would have you do a million more.”

When I felt confident enough in the system to share it with the client, I produced a deliberate first batch: 54 illustrations across all content categories. Internally, we calibrated our expectations; fifty percent acceptable would have felt like a reasonable starting point. We weren't sure what to expect.

The results: 21 strong, 19 acceptable, 14 poor. That is 40 of 54, a 74% strong-or-acceptable rate on the first proper client review. Critically, almost none of the failures were style failures; the consistency was holding. The poor results were concentrated in edge cases: abstract content that was hard to describe visually, or very specific compositional details (hand positions, object placements) that are genuinely difficult to control precisely through prompting.

The project demonstrated something I think matters beyond this specific brief. A designer doesn't have to wait for a developer to build the tool they need to test an idea. The feedback loop that used to take weeks can compress into days. That changes what's worth attempting — and it changes what a designer can contribute, not just to the brief, but to the solution.

Once the system was stable, I designed the full production workflow and trained a mid-weight designer to operate it independently — tool use, client approval rounds, feedback collation, and sign-off.

74% Strong-or-acceptable on first client review

150+ On-brand illustrations delivered at scale

“I really love these illustrations... Would have you guys do a million more but my Co-founder would fire me.”

Co-founder, Client App